Almost a year ago, I was in a meeting where someone told me that the project we were working on needed to use LoRa positioning so that diggers and trucks could be tracked on a real-time map. I am not at liberty to give you my full response, but my professional response was that the technology is not mature enough for that purpose.

A few days later, I had a meeting with Intel (yes, those guys that do computer chips), and we were discussing facial recognition and how it has been used to “locate” people. But it became apparent that “locate” referred to where the camera was situated, not the person's real location or where they might be going. It got me thinking and researching, pondering how you could capture the object and track its progress in map space.

A short while later, at the Esri UK conference in London, I am sitting among a few thousand other geo nerds watching a toy train going around a track. As it goes around, it detects a toy and puts it on a map. And for me, the penny drops: I’d found the final piece of the puzzle. It wasn’t until I met with a couple of developers at the Esri User Conference last year, though, that the method I had considered was confirmed.

Using object detection, it is possible to identify objects; for example, you could easily identify different types of trains and equipment moving around a train yard. If you placed a tag or unique sticker on each (or a name badge), you could identify each train; you could even have the system tell you when each appeared within the view of the camera. By showing the machine learning (ML) algorithm many images of objects from different angles, it can “learn” what objects are with a high level of accuracy and identify them.

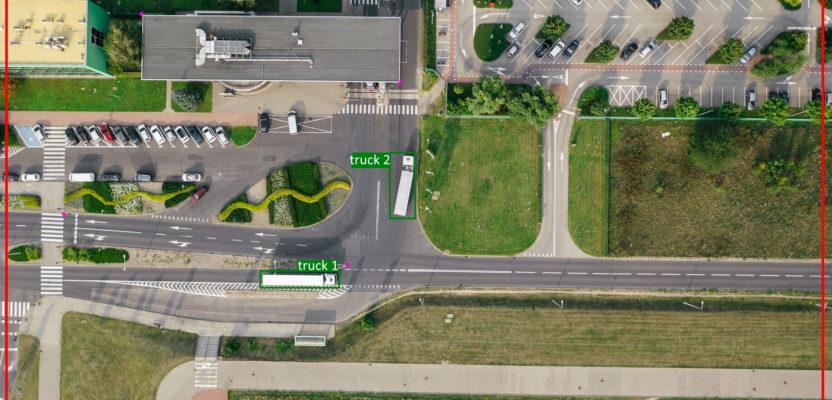

Mapping the objects, that is, converting real-world space to map space, is a trickier problem. The easiest solution is to mount a camera above the area you want to track; the extent of the camera’s view can then be mapped to map space so that each pixel is given a coordinate. A 4k camera mounted 100m above an area of interest could quite easily give you a resolution of less than 1m per pixel (dependent on field of view). This is better than you would get from a GPS unit (depending on hardware and coordinate systems, etc.).
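To put a rough number on the "less than 1m per pixel" claim, here is a minimal sketch of the arithmetic. It assumes a camera pointing straight down over flat ground with no lens distortion; the 90 degree field of view and the 3840-pixel frame width are my own illustrative values, and `ground_resolution_m_per_px` is a made-up helper name.

```python
import math


def ground_resolution_m_per_px(height_m, fov_deg, pixels_across):
    """Approximate ground distance covered by one pixel for a downward-facing camera."""
    # Ground width seen across the frame: 2 * height * tan(FOV / 2).
    ground_width_m = 2.0 * height_m * math.tan(math.radians(fov_deg) / 2.0)
    return ground_width_m / pixels_across


# Assumed example: 4k UHD frame (3840 px wide), 100 m up, 90 degree horizontal FOV.
res = ground_resolution_m_per_px(100.0, 90.0, 3840)
print(f"{res:.3f} m per pixel")
```

With those assumed values the frame covers about 200m of ground, so each pixel is only a few centimetres across. A narrower lens gives finer pixels over a smaller area.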

In the image above, you can see the extent within the camera view denoted by the red boundary, the pink dots which represent the georeferenced points, and that the trucks have been detected (green).
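One common way to turn georeferenced points like these into a pixel-to-map conversion is to fit a projective transform (a homography) through four of them. The sketch below is my assumption about how this could be done, not necessarily what the system in the image uses. The corner coordinates are invented local metres, and `solve_linear`, `fit_homography`, and `pixel_to_map` are hypothetical helper names.

```python
def solve_linear(a, b):
    """Solve a * x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("control points are degenerate (for example, collinear)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - known) / m[r][r]
    return x


def fit_homography(pixel_pts, map_pts):
    """Fit the 8 homography parameters from exactly 4 pixel/map point pairs."""
    rows, rhs = [], []
    for (px, py), (mx, my) in zip(pixel_pts, map_pts):
        rows.append([px, py, 1, 0, 0, 0, -px * mx, -py * mx])
        rhs.append(mx)
        rows.append([0, 0, 0, px, py, 1, -px * my, -py * my])
        rhs.append(my)
    return solve_linear(rows, rhs)


def pixel_to_map(h, px, py):
    """Convert one pixel position to map coordinates using the fitted parameters."""
    w = h[6] * px + h[7] * py + 1.0
    return ((h[0] * px + h[1] * py + h[2]) / w,
            (h[3] * px + h[4] * py + h[5]) / w)


# Invented control points: the frame corners of a 3840 x 2160 image and
# their positions in local map metres (image y runs down, map y runs up).
pixel_corners = [(0, 0), (3840, 0), (3840, 2160), (0, 2160)]
map_corners = [(0.0, 112.5), (200.0, 112.5), (200.0, 0.0), (0.0, 0.0)]

h = fit_homography(pixel_corners, map_corners)
truck_map_xy = pixel_to_map(h, 1920, 1080)  # a detection at the frame centre
print(truck_map_xy)
```

In practice you would use more than four control points and a least-squares fit, and correct for lens distortion first; this only shows the shape of the idea.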

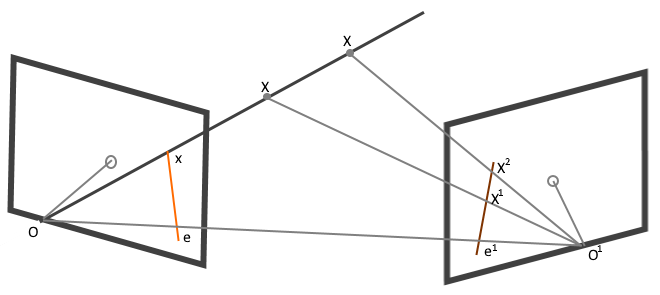

What I discovered at the Esri User Conference was a method for capturing objects in real 3D space and placing them in 3D map space. The principal method uses stereo cameras, much like photogrammetry: with multiple cameras you can map depth as well as the horizontal and vertical axes into true map space. Although I didn’t quite get the full math behind this, it relies on epipolar geometry, which means that if we can find consistent points between the two images, we can work out the coordinates of that space or find where objects are within it (using xyz coordinates).

Using the image above, we can see that if we only look along line OX, we can’t tell where along the line the point falls, but by bringing in the right image we can, using OO1X. Luckily for you, I don’t need to get too involved in the math, as much of it is built into solutions such as OpenCV (the Open Source Computer Vision Library), TensorFlow, and a few scripts that can be found on GitHub.
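For a feel of what those libraries do under the hood, here is a minimal sketch of the simplest case only: two identical cameras mounted side by side and already rectified, so a matched point sits on the same image row in both frames. This is a simplification I have chosen for illustration, not the full epipolar method. The focal length, baseline, and pixel positions are invented, and `triangulate_rectified` is a hypothetical helper name.

```python
def triangulate_rectified(u_left, u_right, v, focal_px, baseline_m, cx, cy):
    """Return (x, y, z) in metres, relative to the left camera, for one matched point.

    u_left / u_right: column of the point in the left and right image (pixels)
    v: row of the point, the same in both images after rectification (pixels)
    focal_px: focal length in pixels; baseline_m: distance between the cameras
    cx, cy: principal point (roughly the image centre) in pixels
    """
    disparity = u_left - u_right
    if disparity <= 0:
        raise ValueError("disparity must be positive for a point in front of the cameras")
    z = focal_px * baseline_m / disparity   # depth: nearer objects shift more
    x = (u_left - cx) * z / focal_px        # sideways offset from the optical axis
    y = (v - cy) * z / focal_px             # vertical offset (image y points down)
    return x, y, z


# Invented example: 3840 x 2160 frames, 1400 px focal length, cameras 0.5 m apart.
point_xyz = triangulate_rectified(
    u_left=2000, u_right=1965, v=1180,
    focal_px=1400.0, baseline_m=0.5, cx=1920.0, cy=1080.0,
)
print(point_xyz)
```

The depth comes purely from how far the point shifts between the two images (35 pixels here). For cameras that are not rectified, OpenCV provides more general routines such as `cv2.triangulatePoints`, which take the calibrated projection matrices of both cameras.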

By using this method, rather than mapping to 2D map space we can map to 3D map space and better understand when an object is moving nearer to or farther from the camera. Also, the side profiles of objects are normally easier to use as points of reference, and the great thing here is that if you use a UHD or 4k stereo camera setup, you could map at better than GPS accuracy.

Let me clarify what this provides: objects are mapped automatically when they come into the camera extent, and then mapped again at intervals which you dictate, at a high level of accuracy.
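As a rough illustration of "mapped when they appear, then at periods which you dictate", the sketch below thins a stream of detections down to one map fix per object per interval. The observation tuples, the object names, and the `sample_track` helper are all hypothetical; a real system would feed this from the detector and the pixel-to-map step.

```python
def sample_track(observations, interval_s):
    """Keep the first fix for each object, then at most one fix per interval_s seconds.

    observations: iterable of (time_s, object_id, map_x, map_y), ordered by time.
    """
    last_kept = {}  # object_id -> time of the last fix we recorded
    kept = []
    for time_s, object_id, map_x, map_y in observations:
        is_new = object_id not in last_kept
        if is_new or time_s - last_kept[object_id] >= interval_s:
            last_kept[object_id] = time_s
            kept.append((time_s, object_id, map_x, map_y))
    return kept


# Invented detections: a truck seen every half second and a digger that appears later.
observations = [
    (0.0, "truck-1", 10.0, 5.0),
    (0.5, "truck-1", 11.0, 5.0),
    (1.0, "truck-1", 12.0, 5.0),
    (1.2, "digger-2", 40.0, 8.0),
    (1.5, "truck-1", 13.0, 5.0),
    (2.0, "truck-1", 14.0, 5.0),
]

fixes = sample_track(observations, interval_s=1.0)
for fix in fixes:
    print(fix)
```

Each object is recorded the moment it is first seen, so the digger gets a fix at 1.2 seconds even though that is off the truck's one-second rhythm.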

The potential of this technology is immense and could save and help many lives if used in the right way. Some of the uses could include:

- Traffic incident response – Detecting crashes or fires, mapping and recording them, then notifying the emergency services

- Wildfire monitoring – Similar to the current method of image classification and AI, but using cameras mounted around known problem areas

- Industrial sites – Monitoring plant and equipment for health and safety or insurance purposes, and checking locations against the plan

- Monitoring of sensitive locations such as protected birds/animal grounds

Far from being just a great idea of mine, it is already being used in some applications, like Mapillary, which Marc Delgado covered recently, and the UK Metropolitan Police are trialing a facial recognition version.

Although it could feel like Big Brother is watching you, it could also provide you with real-time traffic, cheaper housing, and a more efficient workforce. It’s a chance I’m willing to take.